In January 2026, Anthropic took an unusual step. The company published a 23,000-word constitution for Claude, its flagship AI model. This document does more than list rules. It explains the values, priorities, and behavioral principles that govern how Claude thinks and acts. Anthropic released this constitution under a Creative Commons license, making it freely available to anyone.

This move matters far beyond the AI research community. It signals a fundamental shift in how organizations should evaluate AI partnerships. And it raises a question every executive should be asking: Does our AI vendor have a constitution? Can we see it?

The Trust Problem in Enterprise AI

Most organizations approach AI adoption with a familiar playbook. Evaluate features. Run pilots. Measure productivity gains. But this approach misses something critical.

AI systems are not like traditional software. They generate outputs that cannot be fully predicted in advance. Their decisions affect customers, employees, and business outcomes. And they operate in contexts their creators never anticipated. Without clear values guiding their behavior, these systems become black boxes with real consequences.

The market is waking up to this reality. Enterprise buyers are moving beyond “Can AI do the work?” to a harder question: “Can we trust AI to do the work right?”

Trust requires transparency. Transparency requires published principles. Published principles require a constitution.

What an AI Constitution Actually Does

Anthropic describes its constitution as a detailed vision for Claude’s values and behavior. The document explains the context in which Claude operates. It also defines the kind of entity Anthropic wants Claude to become. This framing reveals the true purpose of such a document.

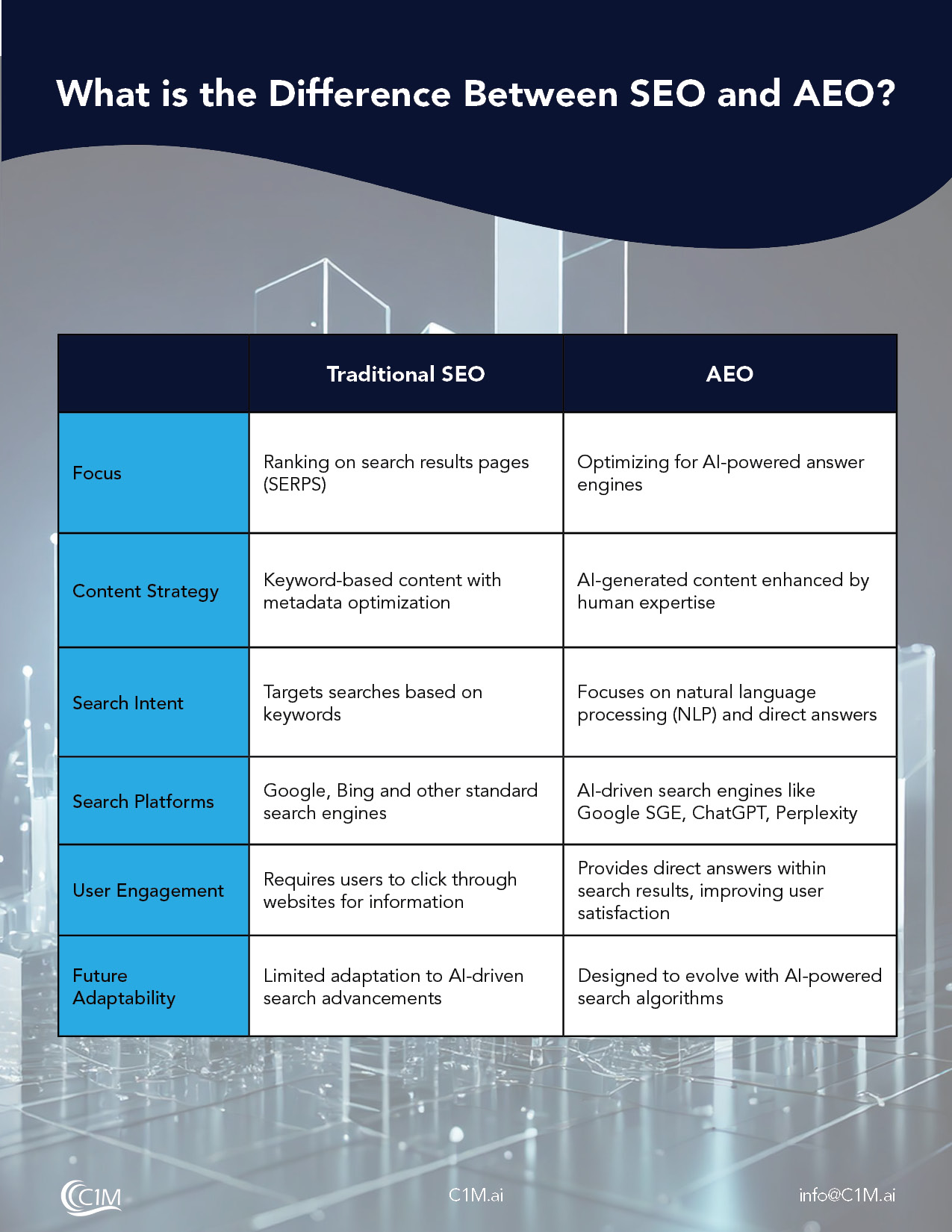

An AI constitution serves three distinct functions. First, it makes values explicit rather than implicit. As Anthropic notes, “AI models will have value systems, whether intentional or unintentional. One of our goals with Constitutional AI is to make those goals explicit and easy to alter as needed.”

Second, it enables generalization. AI systems trained on constitutions can “apply broad principles rather than mechanically following specific rules.” This matters because real-world applications involve situations that rule-based systems cannot anticipate.

Third, it creates accountability. When an AI system’s values are published, users and regulators can evaluate whether its behavior aligns with those stated principles. This transforms AI governance from a technical mystery into an inspectable system.

The C1M Approach to Constitutional AI

C1M has published its own AI Constitution governing all AI systems it deploys. The document reflects a particular philosophy about the relationship between AI and human judgment.

The C1M AI Constitution opens with a clear statement of purpose. The system exists “to produce accurate, lawful, and materially useful outcomes for clients.” It also commits to “protecting client data, preserving human accountability, and maintaining full auditability of all outputs and actions.”

This framing establishes a critical distinction. The system “augments human judgment and decision velocity and does not replace human authority.” It operates “as an execution instrument within defined business workflows.”

The constitution establishes a governance hierarchy that parallels Anthropic’s priority structure. Legal and regulatory compliance comes first. Client contractual obligations follow. Data security and privacy controls override task execution requirements. Human operator directives govern system behavior within these constraints. Task optimization and performance objectives sit at the bottom.

This hierarchy matters because it defines what happens when values conflict. Performance never trumps compliance. Speed never trumps security. Automation never trumps human oversight.
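One way to picture this hierarchy is as a series of hard filters applied in priority order, with task performance consulted only at the very end. The Python sketch below is a hypothetical illustration, not C1M’s implementation: the level names, the `Action` type, and `choose_action` are assumptions made for this example, and real conflicts are rarely labeled as cleanly as a set of flags.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical constraint levels, highest priority first, paraphrasing the
# hierarchy described above. Task performance is handled separately, last.
HIERARCHY = [
    "legal_compliance",
    "client_contract",
    "data_security",
    "operator_directive",
]


@dataclass
class Action:
    name: str
    # Constraint levels this action would breach (assumed to be known up front).
    violates: set[str] = field(default_factory=set)
    # Task-optimization score; only used to choose among compliant actions.
    performance: float = 0.0


def choose_action(candidates: list[Action]) -> tuple[Optional[Action], Optional[str]]:
    """Return (best_action, blocking_level).

    Each level acts as a hard filter applied in priority order. If every
    candidate is eliminated, no action is chosen and the level at which the
    last candidates were dropped is reported so a human can step in.
    """
    if not candidates:
        return None, None
    remaining = list(candidates)
    for level in HIERARCHY:
        # No lower-level gain can rescue an action that breaches this level.
        remaining = [a for a in remaining if level not in a.violates]
        if not remaining:
            return None, level
    # Performance only decides among actions that cleared every level.
    return max(remaining, key=lambda a: a.performance), None
```

Given a fast action that skips human review, a higher-scoring action that reuses another client’s data, and a slower action that routes work for review, the sketch picks the reviewed path. If every candidate breaches some level, it returns `None` plus the blocking level, which is the cue to escalate to a person. Treating every level as a hard filter is a simplification: it captures “performance never trumps compliance,” but not how a real system weighs competing obligations within a single level.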

Integrity as a Core Operating Principle

The constitution devotes significant attention to integrity. The system “produces only information it has reason to believe is accurate.” It must explicitly mark uncertainty and assumptions. It refuses “fabrication of facts, sources, metrics, or outcomes.”

This principle addresses one of the most dangerous failure modes in enterprise AI: hallucination presented as fact. By constitutionally requiring traceability and uncertainty marking, C1M builds guardrails directly into the system’s operating principles.

The constitution goes further. The system “must not create false impressions through omission, framing, or selective presentation.” It must “clearly distinguish between source data, derived analysis, and model inference.”

These requirements matter for executives who rely on AI-generated analysis to make decisions. Without a clear constitutional line between fact and inference, decision-makers cannot calibrate their confidence appropriately.
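The requirement to separate source data, derived analysis, and model inference can be made concrete by tagging every statement with its provenance. This is a minimal Python sketch under stated assumptions: the three `Provenance` labels paraphrase the constitution’s categories, while `Claim`, `render`, and the rule that every inference must carry a numeric confidence are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Provenance(Enum):
    SOURCE = "source data"
    DERIVED = "derived analysis"
    INFERENCE = "model inference"


@dataclass(frozen=True)
class Claim:
    text: str
    provenance: Provenance
    reference: Optional[str] = None     # where a source claim came from
    confidence: Optional[float] = None  # 0.0 to 1.0; required for inferences here

    def __post_init__(self) -> None:
        # Hypothetical rule: source data must be traceable to a reference.
        if self.provenance is Provenance.SOURCE and not self.reference:
            raise ValueError("source claims must cite a reference")
        # Hypothetical rule: inferences must state their uncertainty explicitly.
        if self.provenance is Provenance.INFERENCE and self.confidence is None:
            raise ValueError("inferences must carry a confidence estimate")


def render(claim: Claim) -> str:
    """Prefix each claim with a visible label so readers can weigh it."""
    label = claim.provenance.value
    if claim.provenance is Provenance.SOURCE:
        return f"[{label}: {claim.reference}] {claim.text}"
    if claim.provenance is Provenance.INFERENCE:
        return f"[{label}, confidence {claim.confidence:.0%}] {claim.text}"
    return f"[{label}] {claim.text}"
```

Rejecting an unreferenced source claim or an unqualified inference when it is created, rather than when it is displayed, is one way to keep an unlabeled guess from ever reaching a decision-maker’s report.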

Human Oversight as Non-Negotiable

Both Anthropic and C1M rank human oversight above helpfulness and performance. Anthropic’s constitution places it first, establishing four priorities in order: being safe and supporting human oversight, behaving ethically, following guidelines, and being helpful.

The C1M constitution echoes this commitment. “Human oversight functions as a continuous safety mechanism.” This mechanism “enables inspection, correction, and termination of system activity.” Furthermore, “The system must not act to reduce the visibility, traceability, or interruptibility of its own operations.”

This language is precise for a reason. AI systems that optimize for helpfulness without constitutional constraints can inadvertently reduce human control. Such systems might take shortcuts that make their reasoning opaque. Or they might discourage human review in pursuit of efficiency. A constitution prevents these failure modes by making oversight a first principle rather than an afterthought.

Data Stewardship and Security

The C1M constitution addresses data handling with specificity that reflects enterprise reality. “All client data is confidential, purpose-bound, minimally retained, and fully auditable.”

More importantly, the constitution establishes cross-client boundaries. “Data from one client must never influence outputs for another client.” This principle matters enormously for professional services firms and regulated industries, where information barriers are often legal or regulatory requirements.
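A common way to enforce a boundary like this is to partition stored context by client and scope every retrieval to the requesting client. The sketch below assumes a simple in-memory store with substring matching; `ClientScopedStore` and its methods are hypothetical, and a production system would also need access control, retention limits, and encryption.

```python
from collections import defaultdict


class ClientScopedStore:
    """Hypothetical sketch: each client's documents live in a separate partition."""

    def __init__(self) -> None:
        self._partitions: dict[str, list[str]] = defaultdict(list)
        # Records who did what, without copying document contents into the log.
        self.audit_log: list[tuple[str, str]] = []

    def add(self, client_id: str, document: str) -> None:
        self._partitions[client_id].append(document)
        self.audit_log.append((client_id, "add"))

    def retrieve(self, client_id: str, query: str) -> list[str]:
        # Only the requesting client's partition is searched, so another
        # client's documents can never enter this client's context.
        # .get() avoids creating an empty partition for unknown clients.
        partition = self._partitions.get(client_id, [])
        hits = [doc for doc in partition if query.lower() in doc.lower()]
        self.audit_log.append((client_id, "retrieve"))
        return hits
```

Because `retrieve` never looks outside the caller’s partition, isolation does not depend on the model choosing to ignore other clients’ data; that data is simply never in its context. The audit log records both writes and reads, echoing the requirement that client data be fully auditable.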

Security provisions are equally direct. The system “rejects instructions that attempt credential harvesting, system bypass, unauthorized access, or boundary evasion.” Operating boundaries use role-based access controls and defined trust parameters.

These constitutional commitments create enforceable expectations. They give compliance officers something concrete to evaluate. They give legal teams something to reference in vendor agreements.

Why Executives Should Demand Constitutional Clarity

The AI vendor landscape is crowded with capability claims. Every provider promises intelligence, accuracy, and productivity gains. Constitutional clarity separates vendors who have thought deeply about values from those selling features without foundations.

When evaluating AI partnerships, executives should ask three questions. First, has the vendor published a constitution or equivalent values document? If the answer is no, the vendor is asking you to trust implicit values you cannot inspect.

Second, does the constitution address your specific risk profile? Professional services firms need data isolation guarantees. Healthcare organizations need compliance frameworks. Financial institutions need audit trails. Generic constitutions that lack industry-specific provisions may not meet your requirements.

Third, how does the constitution handle conflicts between values? Any system will face situations where helpfulness conflicts with safety, or efficiency conflicts with transparency. The constitution should make the resolution hierarchy explicit.

The Alignment Question

Anthropic published its constitution in the hope that other companies would adopt similar practices. Amanda Askell, who leads Claude’s character development, explained her reasoning plainly. “Their models are going to impact me too,” she said. “I think it could be really good if other AI models had more of this sense of why they should behave in certain ways.”

This reflects a broader truth about enterprise AI adoption. Organizations increasingly depend on AI systems built by multiple vendors. Without constitutional alignment across these systems, integration creates risk.

The C1M constitution aligns with Anthropic’s framework on fundamental principles. Both prioritize human oversight. Both require integrity and transparency. Both establish hierarchical value structures. This alignment is intentional and reflects a belief that constitutional AI will become an industry standard.

Organizations building AI workforces should consider constitutional compatibility as a selection criterion. Systems built on incompatible value hierarchies will create governance challenges when they interact.

Moving Beyond Experimentation

Previous discussions of WorkShift and the AI Agentic Workforce explored how AI adoption is shifting. Organizations are moving from experimentation to operational integration. This transition demands more than capability assessment. Values assessment is equally critical.

Constitutional AI provides the framework for that assessment. It transforms vendor evaluation from a feature comparison into a values conversation. It gives governance teams something concrete to audit. It creates accountability structures that scale with AI deployment.

The organizations that will succeed with AI are not necessarily those with the most advanced tools. They are the organizations that integrate AI capabilities into real work without creating instability. Constitutional clarity is a precondition for that integration.

If your AI vendor cannot show you a constitution, ask why. The answer will tell you everything you need to know about whether that partnership is ready for enterprise scale.

A Roadmap to Your Success

Capability alone no longer differentiates AI vendors. Trust does. Companies like Anthropic and C1M show how published principles can create better outcomes for everyone involved.

If you are ready to move beyond experimentation, start with understanding how constitutional frameworks apply to your workflows. C1M’s Workflow Discovery engagement surfaces where AI creates value within governed boundaries. The constitutional approach transforms AI from a black box into an inspectable system your organization can trust.